Sample Program - Word Count

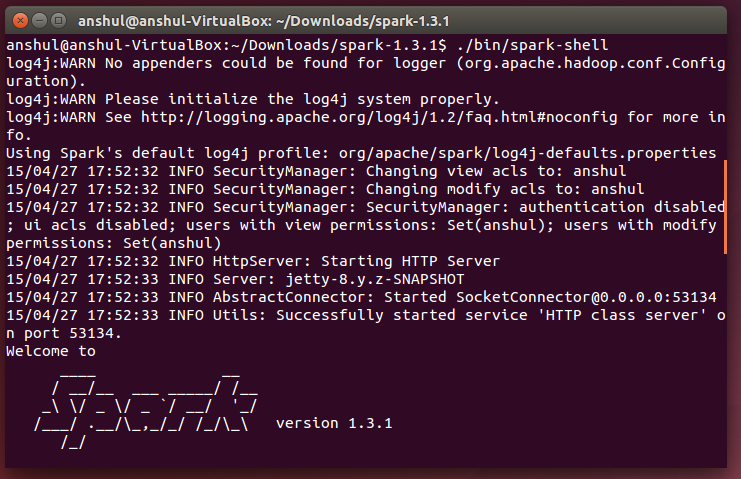

Start Spark Shell

- cd to the folder where Spark was extracted

- Run the following command: $ ./bin/spark-shell

- This starts the Spark shell, which provides a ready-made SparkContext named sc.

Writing Word Count Program

Run the following commands in the shell:

- val f = sc.textFile("README.md")

- val wc = f.flatMap(l => l.split(" ")).map(word => (word, 1)).reduceByKey(_ + _)

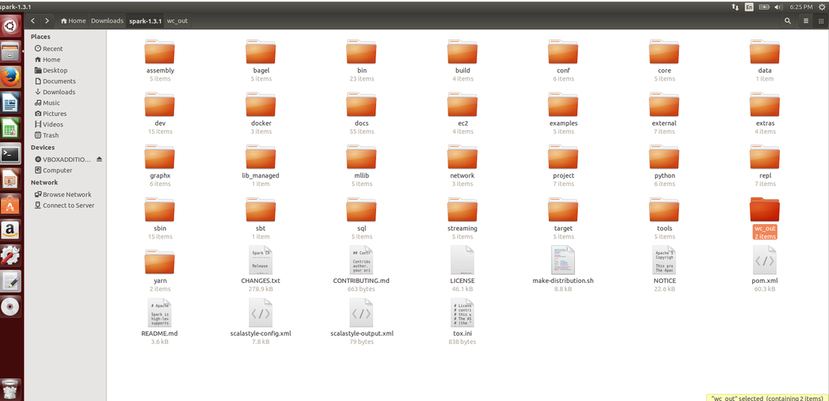

- wc.saveAsTextFile("wc_out")

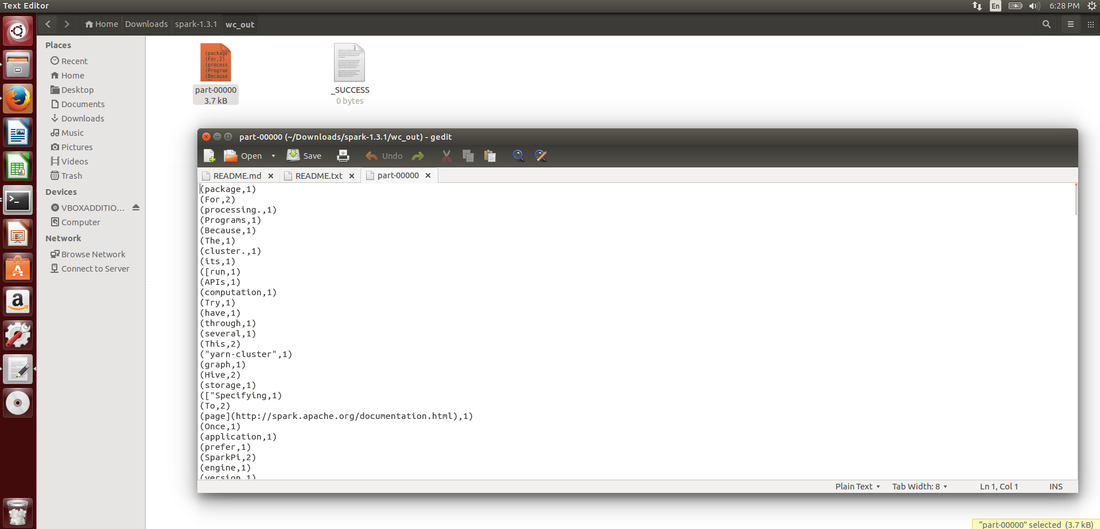
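To see what each step of the pipeline does, the same logic can be sketched on plain Scala collections, with no Spark required. This is only an illustration: the sample lines are made up, and groupBy plus a sum stands in for Spark's reduceByKey, which does not exist on ordinary collections.

```scala
// Plain Scala collections stand in for the RDD here; no Spark needed.
// The two input lines are hypothetical sample data.
val lines = Seq("to be or", "not to be")

// flatMap: split every line into words and flatten into one sequence
val words = lines.flatMap(l => l.split(" "))

// map: pair each word with an initial count of 1
val pairs = words.map(word => (word, 1))

// Stand-in for reduceByKey(_ + _): group the pairs by word, then sum the counts
val counts = pairs.groupBy(_._1).map { case (w, ps) => (w, ps.map(_._2).sum) }

counts.foreach(println)
```

You can paste this into the Spark shell or any Scala REPL. In Spark, reduceByKey performs the same per-word summation, but combines counts within each partition before merging them across partitions.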

Go inside the wc_out folder and check the partition files; each line holds one (word, count) pair.
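The results can also be inspected without leaving the shell. The lines below are a small sketch that assumes you are still in the same spark-shell session, so that sc and wc from the earlier commands are defined and wc_out has already been written.

```scala
// Assumes the same spark-shell session: sc and wc are defined above.

// Read the saved output back; textFile accepts a directory and reads its part files
val saved = sc.textFile("wc_out")
saved.take(5).foreach(println)

// Show the ten most frequent words straight from the RDD
wc.sortBy(_._2, ascending = false).take(10).foreach(println)
```

take brings only a handful of records back to the driver, which keeps this safe to run even on larger inputs.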

Apache Spark is now working, and we are all set to do more with it.

Thank You :)

Anshul Shrivastava
